
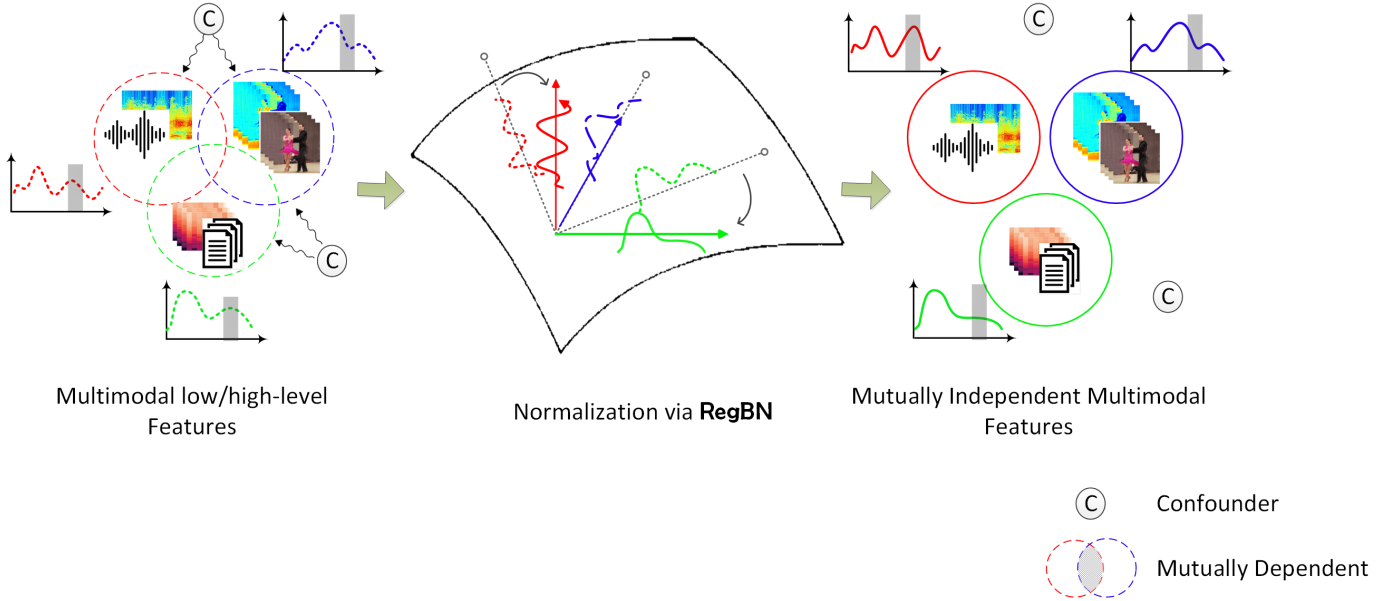

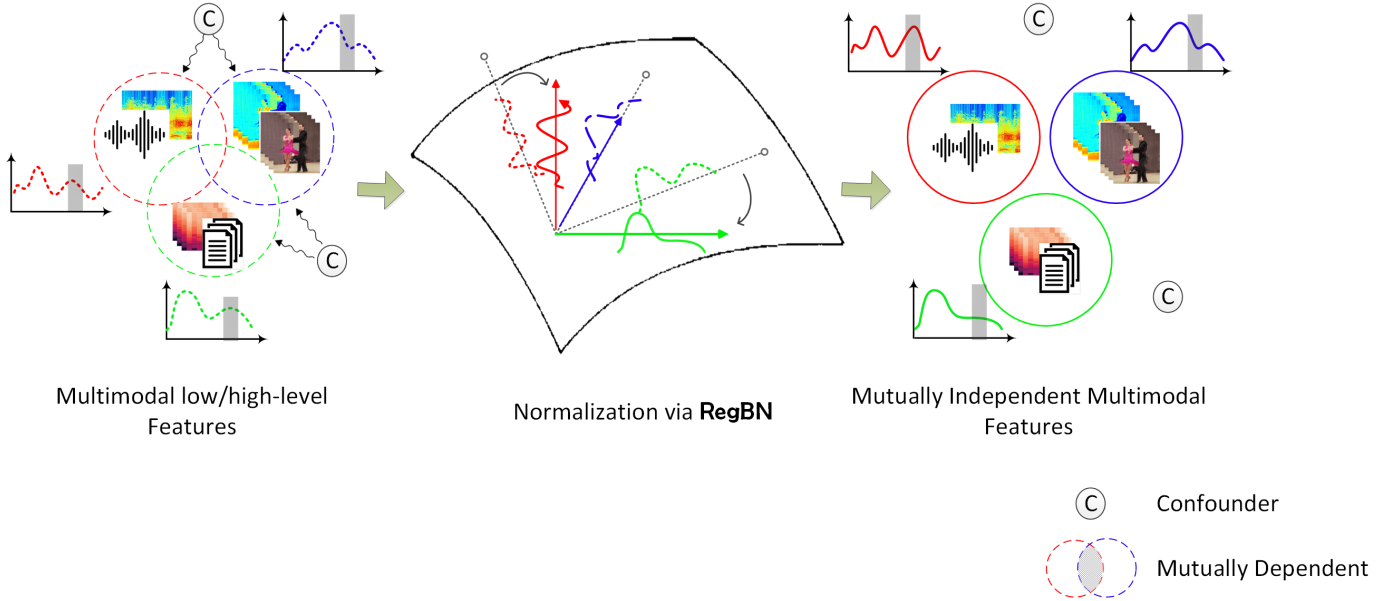

This paper introduces RegBN, a novel regularization-based approach to normalizing multimodal data. RegBN uses the Frobenius norm as a regularizer to address the side effects of confounders and underlying dependencies among different data sources. It enables effective normalization of both low- and high-level features in multimodal neural networks.

Consider a trainable multimodal neural network (e.g., built from MLPs, CNNs, or ViTs) with modality-specific backbones \(A\) and \(B\). Let \(f^{(l)}\) denote the output of the \(l\)-th layer of network \(A\) for a batch of size \(b\), with \(n_1\times\ldots\times n_N\) features flattened into a vector of size \(n\). Similarly, let \(g^{(k)}\) denote the output of the \(k\)-th layer of network \(B\), with \(m_1\times\ldots\times m_M\) features flattened into a vector of size \(m\).

RegBN makes \(f^{(l)}\) and \(g^{(k)}\) mutually independent via the objective

$$F(W^{(l,k)},\lambda_{+}) = ||{f^{(l)}-W^{(l,k)} g^{(k)}}||^2_2+\lambda_{+} (||{W^{(l,k)}}||_F-1)$$

where \(W^{(l,k)}\) is a projection matrix of size \(n\times m\) and \({\lambda}_{+}\) is a Lagrangian multiplier. Both \(W^{(l,k)}\) and \({\lambda}_{+}\) are estimated with the recursive algorithm proposed in the paper.
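To make the objective concrete, the following is a minimal NumPy sketch, not the RegBN implementation. It evaluates \(F\) on a batch and removes from \(f\) the part that is linearly predictable from \(g\). Two assumptions are made here that are not stated above: features are stored row-wise per sample, so \(W g\) becomes `g @ W.T` on a batch, and \(\lambda\) is held fixed with a closed-form ridge solution standing in for the paper's recursive estimation of \(W^{(l,k)}\) and \(\lambda_{+}\). The function names are hypothetical.

```python
import numpy as np


def regbn_objective(f, g, W, lam):
    """Evaluate ||f - W g||_2^2 + lam * (||W||_F - 1) over a batch.

    Assumed shapes: f is (b, n), g is (b, m), W is (n, m).
    """
    residual = f - g @ W.T
    return np.sum(residual ** 2) + lam * (np.linalg.norm(W, "fro") - 1.0)


def ridge_projection(f, g, lam):
    """Ridge stand-in (assumption): minimize ||f - g W^T||^2 + lam * ||W||_F^2.

    This replaces the paper's recursive estimation with a fixed-lambda
    closed form, purely for illustration.
    """
    m = g.shape[1]
    # Normal equations: (g^T g + lam * I) W^T = g^T f
    Wt = np.linalg.solve(g.T @ g + lam * np.eye(m), g.T @ f)
    return Wt.T


# Synthetic batch in which f depends linearly on g, plus small noise.
rng = np.random.default_rng(0)
b, n, m = 64, 5, 3
g = rng.normal(size=(b, m))
W_true = rng.normal(size=(n, m))
f = g @ W_true.T + 0.01 * rng.normal(size=(b, n))

W = ridge_projection(f, g, lam=1e-3)
f_res = f - g @ W.T  # part of f that g does not linearly explain
```

In this sketch, `f_res` plays the role of the normalized feature: its cross-product with `g` is close to zero, so only the linear dependence between the two modalities is removed. Nonlinear dependence is not addressed by this simplified version.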

Where is it advisable to apply RegBN?

In the paper, we report the performance of RegBN on eight multimodal databases spanning a range of modalities and applications, including multimedia, affective computing, robotics, and healthcare diagnosis.

MNIST

Multimedia (MM-IMDb)

Acknowledgments